Quick scan before the full breakdown.

Goal

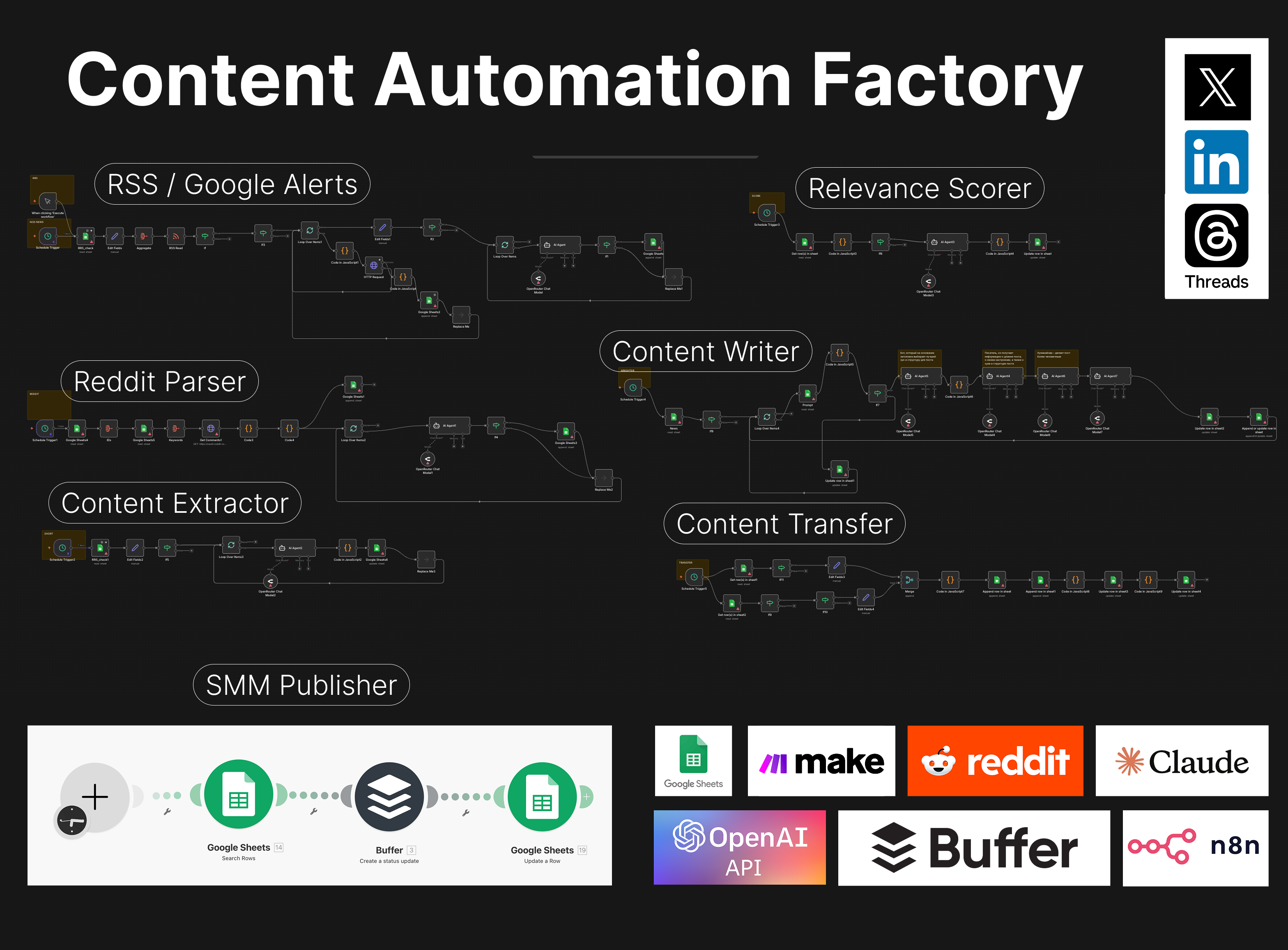

Automate AI news monitoring, scoring, and draft creation for LinkedIn, X, and Threads

Stack

n8n, OpenAI, Claude, Google Sheets, Make, Buffer, Telegram

Result

Reliable weekly content pipeline with only high-scoring drafts reaching the final posting table

Time saved

At least 7 hours per week saved on research, summarizing, and first-draft writing

My role: System Architect & Builder

Built an end-to-end content automation system in n8n that monitors fresh AI-related news from RSS feeds and Reddit, filters relevant stories using keyword logic and low-cost LLM checks, parses full articles, extracts key facts, scores relevance, generates draft posts, and transfers only high-scoring results into a final review table.

The system integrates OpenAI, Claude, Google Sheets, Make, Buffer, and Telegram alerts to create a stable, low-touch workflow that saves significant marketer time every week and keeps the content pipeline consistently filled.

I designed and built an end-to-end content automation system that turns fresh AI industry news into ready-to-review social media drafts for LinkedIn, X, and Threads.

The goal was simple: make sure the marketing team always had a steady flow of relevant, timely content every week without spending hours searching for topics, reading articles, and drafting posts manually.

The main challenge was to create a reliable system that could continuously supply fresh content ideas and draft posts for multiple social media channels.

Before this workflow, marketers had to search for topics, read articles, and draft posts manually.

This process took too much time and made content production inconsistent.

I created a multi-step automation system in n8n that monitors fresh AI-related news, filters it, extracts key facts, scores relevance, generates social media drafts, and sends only the best results into a final posting table.

The system combines n8n, OpenAI, Claude, Google Sheets, Make, Buffer, and Telegram alerts.

I started by creating targeted Google Alerts queries focused on AI applications and related topics.

These alerts generated RSS feeds, which were then monitored by n8n every morning.
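The morning feed check can be sketched outside n8n in a few lines of Python. This is an illustration under stated assumptions, not the workflow itself: the sample feed, the `new_items` function, and the `seen_links` set are all hypothetical, and the sample uses RSS 2.0 `<item>` elements, so a feed in another format (for example Atom) would need different tag names.

```python
import xml.etree.ElementTree as ET

# Hypothetical RSS 2.0 sample; a real alert feed may use a different format.
SAMPLE_FEED = """<?xml version="1.0"?>
<rss version="2.0">
  <channel>
    <title>Example alert feed</title>
    <item>
      <title>AI tool launches</title>
      <link>https://example.com/a</link>
    </item>
    <item>
      <title>Another AI story</title>
      <link>https://example.com/b</link>
    </item>
  </channel>
</rss>"""


def new_items(feed_xml: str, seen_links: set[str]) -> list[dict[str, str]]:
    """Return feed items whose link has not been seen on an earlier run."""
    root = ET.fromstring(feed_xml)
    fresh = []
    for item in root.iter("item"):
        link = (item.findtext("link") or "").strip()
        title = (item.findtext("title") or "").strip()
        if link and link not in seen_links:
            fresh.append({"title": title, "link": link})
            seen_links.add(link)  # remember it so the next run skips it
    return fresh
```

Tracking seen links is one simple way to make a daily run pick up only what appeared since the previous morning.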

At the same time, a separate workflow parsed selected Reddit threads to capture additional relevant discussions and ideas.

When new items appeared, the system checked them in two stages: first with keyword logic, then with a low-cost LLM check.

Relevant items were saved into a source table.
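A rough sketch of a two-stage check, assuming the stages are the keyword logic and low-cost LLM check named in the overview. The keyword list, the function names, and the `llm_check` callable are hypothetical; in practice `llm_check` would wrap a call to an inexpensive model, which is left out here so the example stays runnable.

```python
import re
from typing import Callable

KEYWORDS = ("ai", "llm", "machine learning")  # hypothetical keyword list


def keyword_match(text: str, keywords: tuple[str, ...] = KEYWORDS) -> bool:
    """Stage 1: cheap whole-word keyword test, no model call."""
    return any(
        re.search(rf"\b{re.escape(k)}\b", text, re.IGNORECASE) for k in keywords
    )


def two_stage_filter(
    items: list[dict[str, str]], llm_check: Callable[[str], bool]
) -> list[dict[str, str]]:
    """Keep items that pass the keyword test and then the model check."""
    relevant = []
    for item in items:
        if not keyword_match(item["title"]):
            continue  # rejected cheaply, the model is never called
        if llm_check(item["title"]):  # Stage 2: only for keyword survivors
            relevant.append(item)
    return relevant
```

Running the keyword test first means the model is only paid for on items that already look plausible.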

Another workflow then visited each source article, parsed the actual page content, and extracted the core facts from the full text.

This step turned long-form articles into short, structured summaries that could be reused later in content generation.
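As an illustration of the page-parsing half of this step, the sketch below strips an HTML page down to its visible text using only the Python standard library. The `TextExtractor` class, the `extract_text` function, and the list of skipped tags are assumptions made for the example; the fact extraction itself (done by an LLM in the system described here) is not shown.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collect visible text, ignoring scripts, styles, and page chrome."""

    SKIP = {"script", "style", "nav", "footer"}  # hypothetical skip list

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep text only when we are not inside a skipped element.
        if self._skip_depth == 0:
            text = data.strip()
            if text:
                self.chunks.append(text)


def extract_text(html: str) -> str:
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)
```

The plain text returned by `extract_text` is the kind of input a summarization prompt could then turn into a short list of facts.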

The summarized news items were then scored on a 1 to 10 relevance scale based on how well they fit the brand’s content goals.

These scores were written back into the table, making it easy to track what was worth using.

Only items with higher scores moved forward.
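One practical detail in a scoring stage like this is turning a model's free-text reply into a usable number. The sketch below is a hypothetical helper, not the system's actual logic: `parse_score`, `items_to_advance`, the `raw_score` field, and the `min_score` parameter are all assumptions, and the cut-off for this stage is left as a parameter because the text does not state it. It also assumes the model is prompted to reply with little more than the score, since it takes the first number from 1 to 10 it finds.

```python
import re
from typing import Optional


def parse_score(raw: str) -> Optional[int]:
    """Pull the first whole number from 1 to 10 out of a model reply."""
    match = re.search(r"\b(10|[1-9])\b", raw)
    return int(match.group(1)) if match else None


def items_to_advance(rows: list[dict], min_score: int) -> list[dict]:
    """Keep rows whose parsed score meets the (assumed) minimum."""
    kept = []
    for row in rows:
        score = parse_score(row["raw_score"])
        if score is not None and score >= min_score:
            kept.append({**row, "score": score})
    return kept
```

Rows with an unparseable reply are dropped rather than guessed at, which keeps a malformed model response from pushing an item forward.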

From there, the system generated draft posts for LinkedIn, X, and Threads.

This stage was not a single prompt.

I split it into multiple steps:

All generated versions were written into structured tables for review.

A final scoring workflow reviewed the generated posts and evaluated their quality.

Only posts that scored 7 to 10 were transferred into the final posting table.
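The transfer rule is simple enough to express directly. In this sketch the `Draft` record and the function name are hypothetical; only the 7 to 10 range comes from the description above.

```python
from dataclasses import dataclass

MIN_FINAL_SCORE = 7   # from the text: only posts scoring 7 to 10 move on
MAX_FINAL_SCORE = 10


@dataclass
class Draft:
    channel: str  # e.g. "LinkedIn", "X", "Threads"
    text: str
    score: int


def select_for_final_table(drafts: list[Draft]) -> list[Draft]:
    """Return only the drafts that qualify for the final posting table."""
    return [d for d in drafts if MIN_FINAL_SCORE <= d.score <= MAX_FINAL_SCORE]
```

Drafts below the threshold stay in the review tables, so nothing is lost; they are just not promoted.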

From the final table, approved content could then be sent through Make and Buffer for publication across social channels.

This meant the system did not publish raw output directly.

It prepared reviewed, structured drafts and moved only strong candidates into the final posting stage.

I designed the workflows as separate time-based automations, so one failure would not break the whole system.

If one workflow failed because of rate limits, parsing errors, or server-side issues, the rest of the pipeline could continue running independently.
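The isolation idea can be illustrated in a single Python process, with the caveat that the real system achieves it by running separate scheduled n8n workflows rather than one loop. The `run_independently` function and the `alert` callable are hypothetical; `alert` stands in for whatever notification channel is used.

```python
from typing import Callable


def run_independently(
    workflows: dict[str, Callable[[], None]],
    alert: Callable[[str], None],
) -> dict[str, str]:
    """Run every workflow; a failure in one never stops the others."""
    status: dict[str, str] = {}
    for name, run in workflows.items():
        try:
            run()
            status[name] = "ok"
        except Exception as exc:  # rate limit, parsing error, server issue...
            status[name] = f"failed: {exc}"
            alert(f"{name} failed: {exc}")  # notify, then keep going
    return status
```

Because each stage reads from and writes to a table, a stage that fails one morning can simply pick up the backlog on its next run.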

I also set up monitoring and Telegram alerts.

This made the system easy to monitor and maintain.

This automation created a reliable weekly content pipeline and significantly reduced manual work for the marketing team.

I estimate the system saved the marketer at least 7 hours per week, mainly by eliminating manual research, summarizing, and first-draft writing.

Instead of creating content from scratch every time, the marketer now reviews prepared drafts once a week, makes edits where needed, and moves forward with publishing.

That drastically reduced repetitive work while still keeping human control over the final quality.

End-to-end System Architect & Builder.

I designed the workflow logic, built the automation architecture, connected the tools, created the filtering and scoring stages, structured the data flow through tables, added monitoring and alerts, and made the overall system resilient enough for daily use.

Three nearby case studies worth reading next.

Apr 10, 2026

An n8n workflow that searches fresh LinkedIn vacancies, checks companies against the IND recognized sponsor register, removes duplicates, and writes application-ready leads into Google Sheets.

Apr 3, 2026

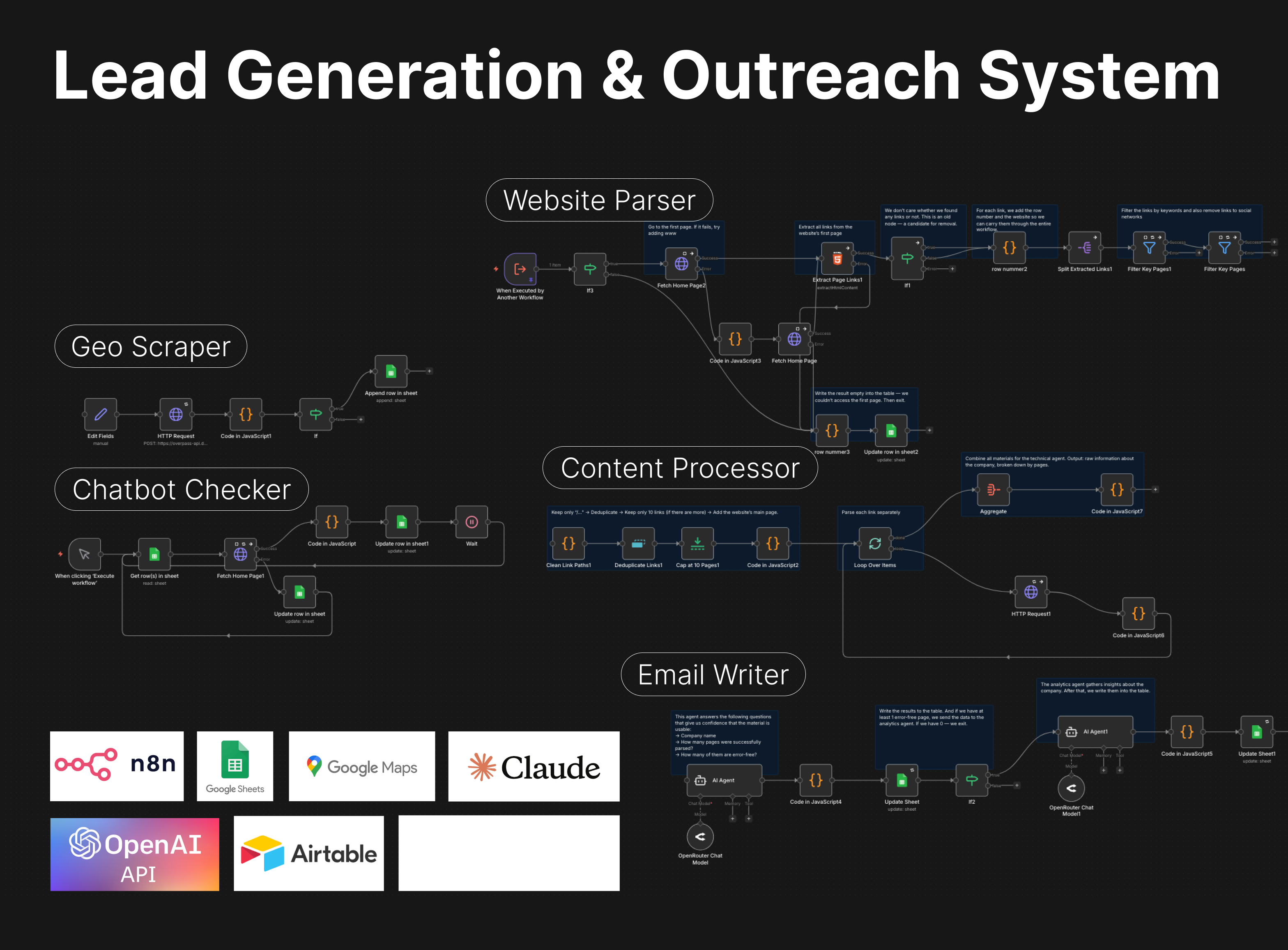

A Slack-controlled prospecting system that collects leads, analyzes websites, qualifies opportunities, and generates personalized outreach emails with minimal manual work.

Apr 4, 2026

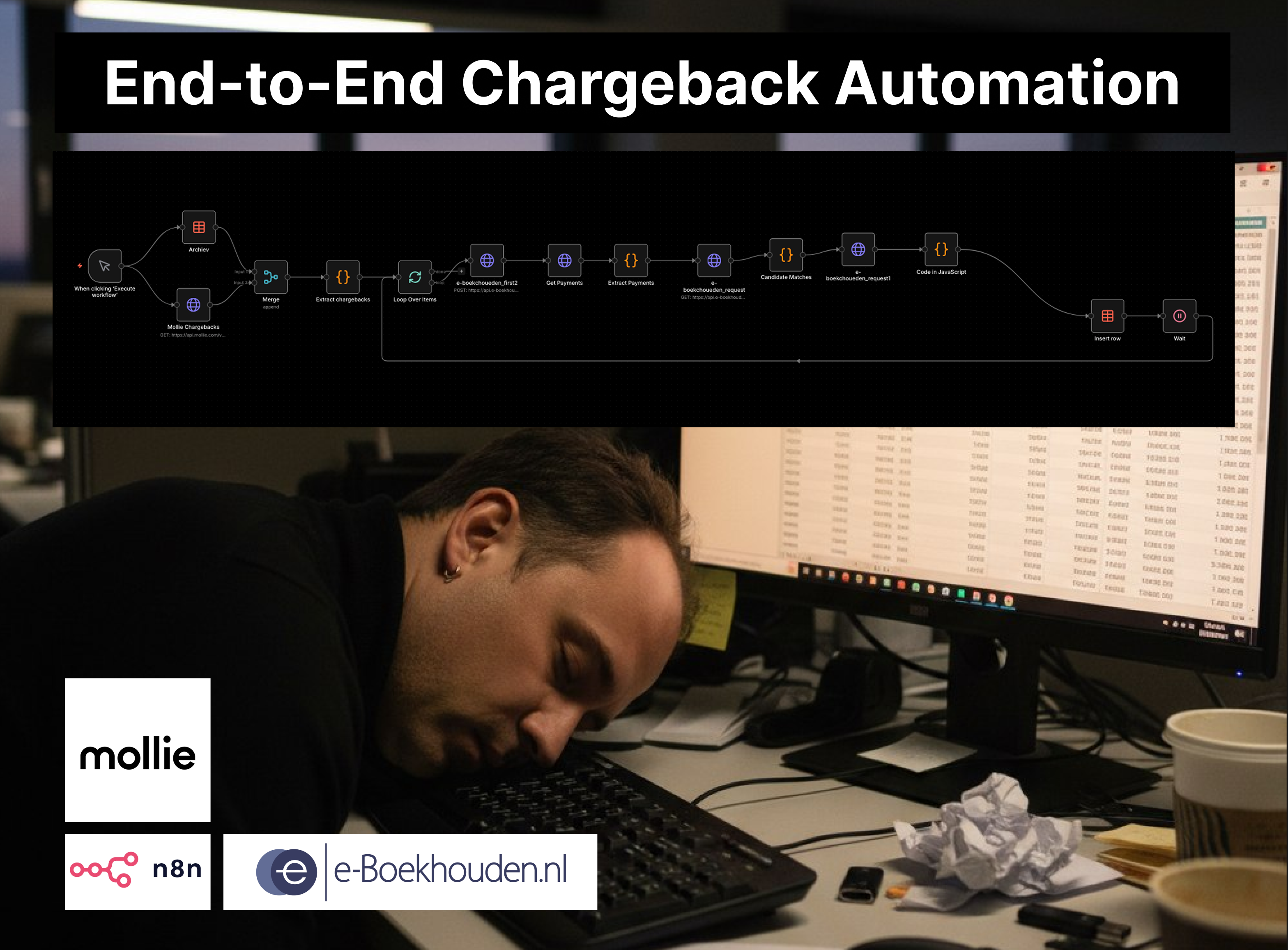

A two-workflow n8n system that matches Mollie chargebacks to the correct e-Boekhouden mutations and creates the accounting entries automatically.

If you have a manual workflow between tools, I can help map the logic, design the system, and automate it in a way your team can actually use.