I won’t read this — for me it’s just straight-up awful AI.

Yesterday I experienced a cognitive bias.

Context: I built an AI that writes new texts based on my previous ones. And for the first time, I actually liked the result and published it without edits. I felt really inspired. Finally!

I showed it to a marketer I know, and she said: “I won’t read this — for me it’s just awful AI.”

It felt like that viral dress photo. I said: “It’s black.” She said: “It’s gold.” I looked again: no, it’s black. She said: “But all the signs show it’s gold!”

And I started thinking: yes, we perceive content differently. But are there really clear signs of AI that a human couldn’t also use? Are there actually bad texts, or just texts that didn’t work?

The question isn’t whether the text is good or bad. The question is whether identifying a text as AI-generated is really that simple.

I remember a time when photographers overused filters and made skin look too smooth. I preferred a natural look, and excessive editing really bothered me. But clients wanted it: they liked those filters, even admired them.

Cognitive biases. How do you deal with this?

Three nearby posts worth opening next.

Apr 12, 2026

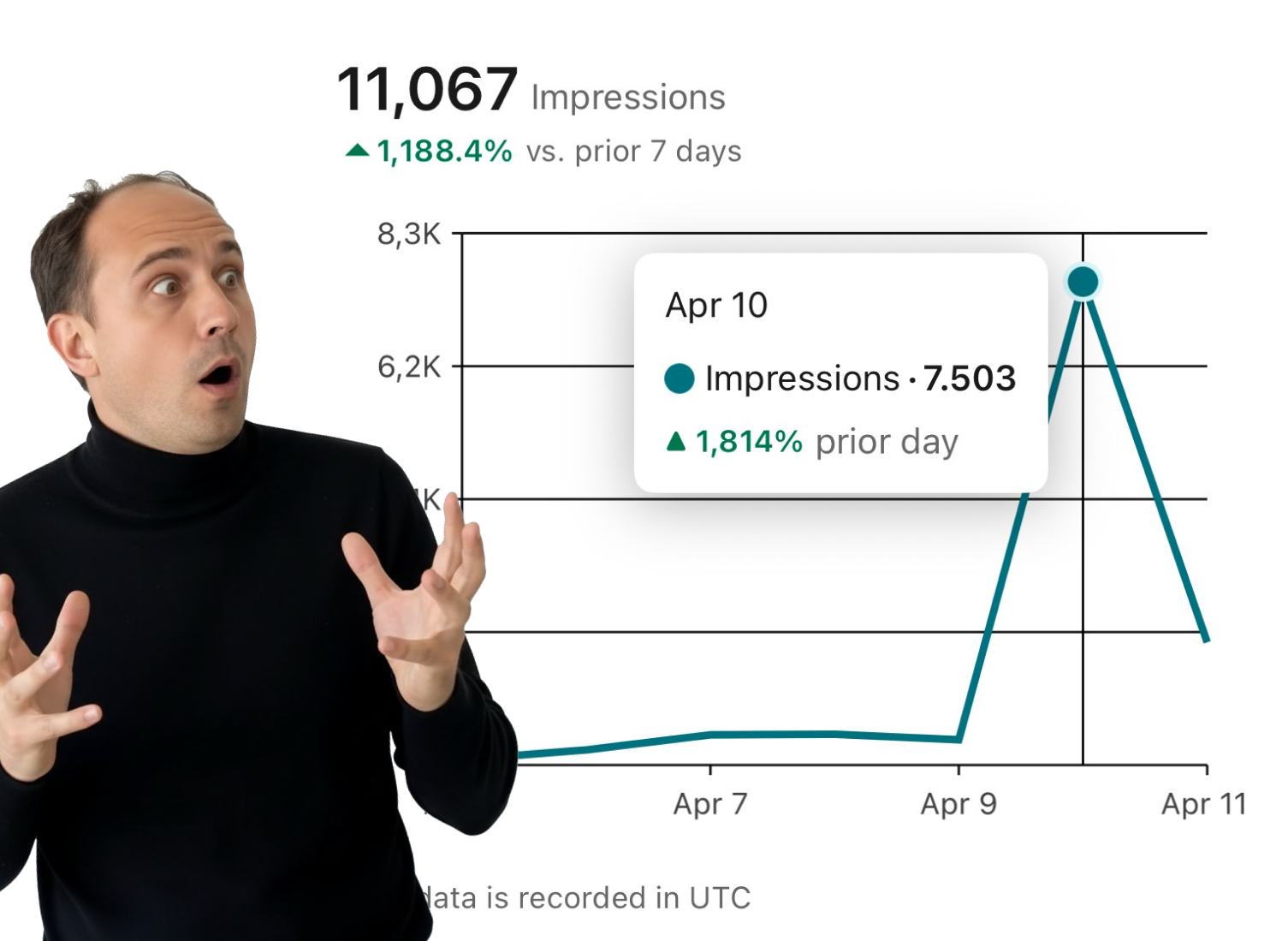

A reach spike feels good for a moment, but the real question is what remains after the numbers fall back down.

Mar 29, 2026

The subscription price is only part of the equation. Review time, rewrites, and subsidized pricing change the picture fast.

Mar 25, 2026

A note on emotional triggers, mechanical engagement, and whether human writing still outperforms clean content systems.
